AI Agents vs. AI Assistants: Understanding the Difference Before You Buy the Wrong Thing

Bryon Spahn

4/8/2026 · 20 min read

Tom had been the Director of IT at a regional logistics company for eleven years. He had survived two ERP migrations, a ransomware incident that nearly ended the company, and the transition from on-premises everything to a hybrid cloud environment that took four years and three vendors to get right. He was not easily rattled. But the meeting with his CEO on a Thursday morning in early spring rattled him.

"We need to deploy AI agents," the CEO said, sliding a printout across the conference table. It was a competitor's press release. The competitor — a similar-sized regional firm — had announced they were deploying "an AI agent platform" to automate dispatch, exception handling, and carrier communication. "Find out what this costs and how fast we can do it."

Tom spent the next three weeks meeting with vendors, watching demos, and reading whitepapers. He collected proposals for six different platforms ranging from $40,000 to $380,000 in first-year costs. Every vendor used the words "AI agent" prominently in their materials. And yet, when Tom dug into the demos, he noticed something troubling: some of these platforms responded to questions. Others actually did things. Some required a human to review and approve every action. Others operated autonomously across multiple systems without any human touchpoint at all. One vendor's "AI agent" was essentially a smarter search bar with a chat interface. Another's was a system that could monitor inbound carrier emails, identify exceptions, query the transportation management system (TMS), draft response communications, update records, and escalate unresolvable issues, all without a human in the loop.

Tom was looking at the same label on fundamentally different products. And he had no framework for evaluating which one his organization actually needed.

This scenario is playing out in boardrooms and IT departments across every industry. The term "AI agent" has become one of the most overloaded, misapplied, and commercially exploited phrases in the technology landscape today. Vendors apply it to everything from basic chatbots to fully autonomous multi-step workflow systems. Business leaders, under pressure to "deploy AI," are writing checks for capabilities they don't fully understand — and in many cases, buying the wrong thing entirely.

This article is a framework for getting it right. We will break down the genuine technical and strategic differences between AI assistants and AI agents, explore the full capability spectrum that exists between them, and give you the decision criteria you need to match the right AI capability to your specific business problem. Because in this market, understanding what you're actually buying isn't just due diligence — it's the difference between a competitive advantage and an expensive mistake.

The Vocabulary Problem Is Costing You Money

Before we establish the framework, we need to acknowledge the root cause of the confusion: the AI industry has a vocabulary problem, and it is being deliberately exploited by vendors who benefit from the ambiguity.

The word "agent" carries powerful connotations. In human contexts, an agent acts on your behalf. They have goals. They make decisions. They take initiative. When a vendor calls their product an "AI agent," they are borrowing all of that meaning — whether their product actually exhibits those properties or not.

The result is a market where a glorified FAQ bot and a fully autonomous multi-system orchestration platform carry the same label. Business leaders reading analyst reports, vendor materials, and technology press are consuming a steady stream of content that treats these wildly different capabilities as if they exist on the same tier. They don't.

The confusion has real financial consequences. Organizations over-invest in autonomous capabilities they aren't operationally ready to deploy safely. Others under-invest, purchasing assistant-tier tools when their use case demands agent-level autonomy. Many buy neither the right tool nor the right capability — they buy the most aggressively marketed one.

At Axial ARC, roughly 40% of the organizations we assess have foundational gaps that prevent them from safely deploying the AI capabilities they think they need. The vocabulary confusion is one of the primary drivers. Leaders can't make informed decisions about capabilities they can't accurately define. So let's define them.

What an AI Assistant Actually Is

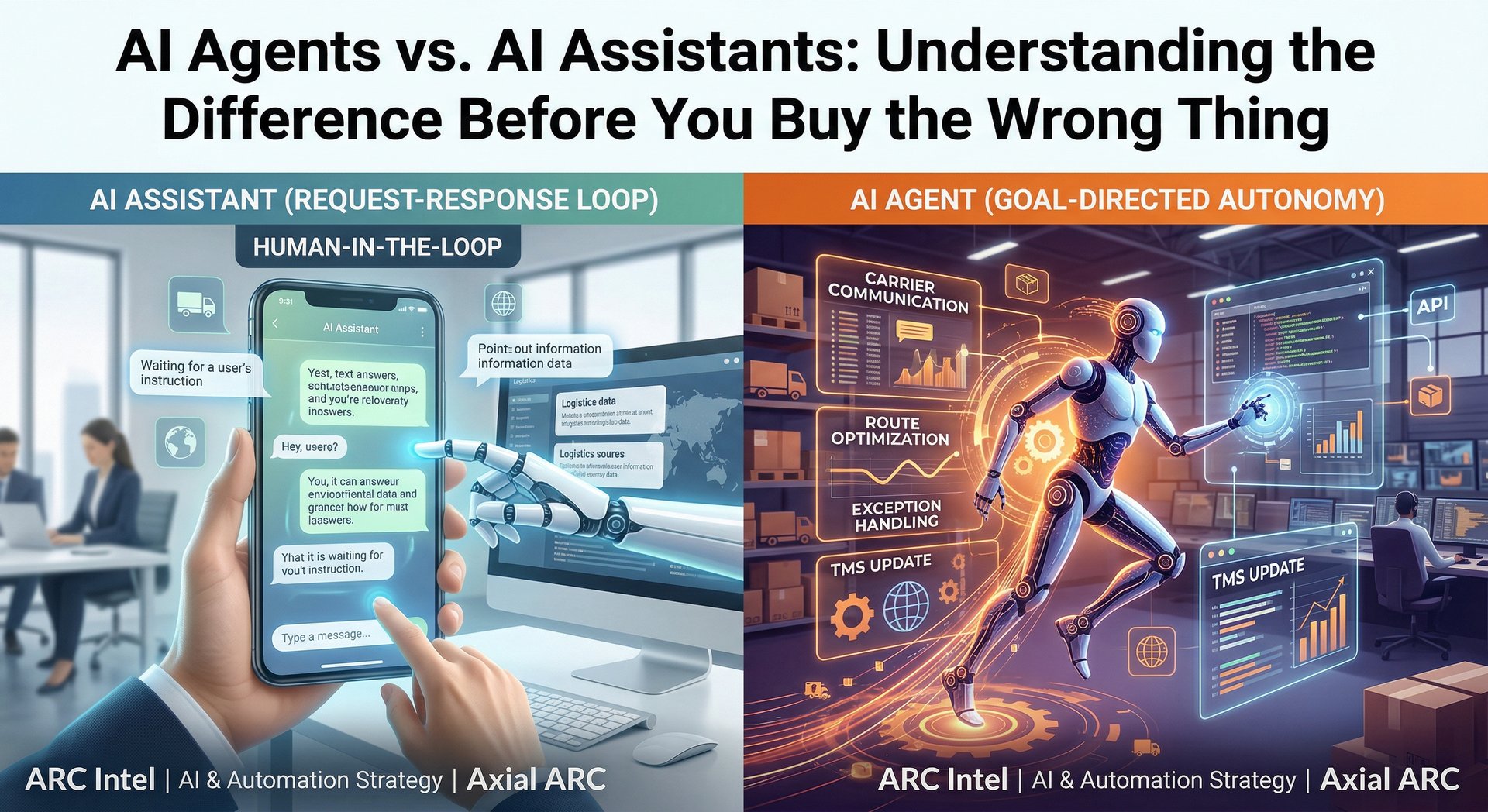

An AI assistant is a tool that responds to human-initiated requests, provides information, generates content, or offers recommendations — and then waits for a human to act on that output.

The defining characteristic of an AI assistant is that it operates within a request-response loop. A human provides input. The assistant processes it and generates output. The human decides what to do with that output. The assistant has no persistent state between interactions, no ability to initiate action on its own, and no access to external systems or tools beyond what the conversation interface allows.

Common examples include:

Generative AI chat interfaces like ChatGPT, Claude, Gemini, and Microsoft Copilot in their base configurations. You ask a question or provide a task. The model generates a response. You read it and decide whether to act on it.

AI-enhanced search and knowledge tools that allow employees to query internal knowledge bases in natural language. The system retrieves and synthesizes information. The employee decides what to do next.

AI writing and summarization tools embedded in productivity platforms. You paste a document and ask for a summary. The tool generates one. You review, edit, and use it.

AI recommendation engines that surface insights, flag anomalies in data, or suggest next actions within a dashboard. The system tells you something. You decide whether to act.

Notice the consistent pattern: the human is always in the decision seat. The assistant enhances human productivity by making information retrieval, content generation, and analysis faster and more accessible. But it does not act. It does not remember context across sessions (in most base configurations). It does not initiate workflows. It does not touch external systems.

This is not a criticism. For a wide range of business use cases, this is exactly the right capability. The mistake is assuming you need more — or less.
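The request-response pattern described above can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: `fake_model`, `AssistantReply`, and `assistant_turn` are hypothetical names, and the "model" simply echoes the request so the example runs on its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssistantReply:
    text: str
    requires_human_action: bool = True  # the assistant never acts on its own


def fake_model(prompt: str) -> str:
    # Hypothetical stand-in for a real model call; it only echoes the request.
    return f"Draft response for: {prompt}"


def assistant_turn(prompt: str) -> AssistantReply:
    # Stateless: nothing from earlier turns is read, nothing is stored,
    # and no external system is touched.
    return AssistantReply(text=fake_model(prompt))


reply = assistant_turn("Summarize the Q3 carrier exceptions")
# The human reads reply.text and decides what, if anything, to do next.
```

The point of the sketch is what is missing: there is no stored state, no trigger other than the human's prompt, and no call that changes anything outside the conversation.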

The Strengths of AI Assistants

Low operational risk. Because a human reviews every output before any action is taken, the blast radius of an error is contained. If the AI gives a wrong answer, the human catches it before it propagates through a business process.

Broad accessibility. AI assistant interfaces are generally intuitive enough that a non-technical user can derive value quickly. The learning curve is shallow. The productivity gains can be immediate.

Flexibility across domains. A single AI assistant platform can support a marketing manager drafting campaign copy, a financial analyst summarizing earnings reports, a customer service rep preparing responses, and an HR leader drafting job descriptions — all in the same tool.

Minimal integration requirements. Because the assistant doesn't need to connect to and act on external systems, deployment typically doesn't require deep technical integration work. The operational overhead is low.

Alignment with human-in-the-loop processes. In highly regulated industries, sensitive business contexts, or high-stakes decision environments, maintaining a human review step for every AI output isn't a limitation — it's a compliance and risk management requirement.

The Limitations of AI Assistants

They don't execute. An AI assistant can draft a purchase order, but a human still has to submit it. It can identify a scheduling conflict, but a human still has to resolve it. For processes that involve dozens of routine, repetitive execution steps, the productivity ceiling is meaningful.

They don't persist context by default. Most base AI assistant implementations don't carry memory across sessions. Every conversation starts fresh. This creates friction in workflows where continuity of context would drive significant value.

They don't initiate. An AI assistant won't notice that your inventory level crossed a reorder threshold and begin a procurement workflow. It only knows what you tell it in the current session.

They don't orchestrate. Complex workflows that span multiple systems — pulling data from a CRM, cross-referencing it against an ERP, generating a communication, logging the interaction, and scheduling a follow-up — require coordination across tools that an assistant-tier product cannot perform.

Understanding these limitations isn't about dismissing AI assistants. It's about being clear-eyed: if your use case requires autonomous execution, multi-system coordination, or proactive initiation, an assistant won't deliver the ROI you're expecting.

What an AI Agent Actually Is

An AI agent is a system that perceives its environment, reasons toward a goal, takes action using tools and integrations, and adjusts its behavior based on the outcomes of those actions — with varying degrees of human oversight.

The defining characteristic of an AI agent is autonomy over a workflow or task sequence. An agent isn't waiting for your next prompt. It is working toward an objective. It has access to tools — APIs, databases, communication systems, web interfaces, internal applications — and it uses those tools to execute multi-step processes.

Where an assistant responds, an agent acts.

True AI agents exhibit several properties that distinguish them from assistants:

Goal-directedness. An agent is given an objective, not just a prompt. "Monitor all inbound customer escalation emails, classify them by urgency and category, route them to the appropriate team queue, draft an initial acknowledgment response, and flag any that reference legal or compliance language for immediate human review" is an agent objective. It defines a desired outcome across multiple steps and systems.

Tool use. Agents are connected to external systems and can invoke APIs, read from and write to databases, browse the web, execute code, send communications, and interact with business applications. Their capability is bounded by what tools they have access to — not by what they can generate in a text response.

Memory and context persistence. Agents maintain state across steps in a workflow and, in more sophisticated implementations, across sessions. They know what they've already done, what they're doing next, and what they've learned from prior interactions.

Multi-step reasoning. Agents plan sequences of actions to achieve goals. They can evaluate intermediate outcomes, adjust their approach based on what they observe, and decide when to escalate to a human based on criteria defined in their configuration.

Autonomy calibration. Well-architected agents include explicit human-in-the-loop checkpoints for high-stakes decisions, ambiguous situations, or defined exception types. The degree of autonomy isn't a binary — it's a configurable design parameter.
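To make these properties concrete, here is a minimal sketch of an agent working through the escalation-email objective described above. It assumes keyword rules in place of real model reasoning, and the class name, keywords, and return values are all hypothetical. Tool calls are reduced to appending to in-memory lists.

```python
from dataclasses import dataclass, field

LEGAL_TERMS = ("lawsuit", "attorney", "regulator")  # illustrative keywords only


@dataclass
class Agent:
    queue_log: list = field(default_factory=list)    # memory of actions taken
    escalations: list = field(default_factory=list)  # items held for a human

    def classify(self, email: str) -> str:
        # Reasoning step, reduced here to keyword rules for the sketch.
        text = email.lower()
        if any(term in text for term in LEGAL_TERMS):
            return "legal"
        return "urgent" if "urgent" in text else "routine"

    def handle(self, email: str) -> str:
        category = self.classify(email)
        if category == "legal":
            # Autonomy calibration: stop and hand off to a human.
            self.escalations.append(email)
            return "escalated"
        # Tool use would happen here (routing, drafting an acknowledgment).
        self.queue_log.append((category, email))
        return f"routed:{category}"

    def run(self, inbox: list) -> list:
        # Goal-directed loop: work through the inbox without a prompt per item.
        return [self.handle(email) for email in inbox]


agent = Agent()
results = agent.run([
    "URGENT: shipment missing",
    "Our attorney will be in touch",
    "Please update my address",
])
```

Note that the human checkpoint is part of the design rather than an afterthought: the legal-language case never reaches the routing step.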

Common examples of true AI agents in deployment today:

Autonomous customer service agents that handle the full lifecycle of a routine inquiry — querying account status, processing a refund, updating a record, sending a confirmation, and closing the ticket — without a human touchpoint until an exception is encountered.

Sales development agents that monitor defined trigger signals (new funding announcements, leadership changes, job postings indicating tech stack investment), enrich prospect records via integrated data sources, draft personalized outreach, queue it for human review or send autonomously, and log all activity back to the CRM.

IT operations agents that monitor infrastructure telemetry, detect anomalies, execute predefined remediation playbooks (restarting services, scaling resources, isolating compromised endpoints), and generate incident reports — escalating to on-call engineers only when automated remediation fails or the severity threshold is crossed.

Financial reconciliation agents that pull transaction data from multiple systems, apply matching logic, flag discrepancies, generate exception reports, and route unresolvable items to the appropriate human reviewer — compressing what was a multi-day manual process to minutes.

Content operations agents that take a single long-form piece of content and autonomously reformat it for multiple channels, post according to a defined schedule, monitor engagement metrics, and surface performance summaries to the marketing team — without requiring a human to manage each step.

The ACTOR Framework: Five Dimensions That Separate Assistants from Agents

To cut through vendor marketing and evaluate any AI tool with clarity, we use what we call the ACTOR framework — five dimensions that together define where any given AI capability actually sits on the spectrum from assistant to agent.

A — Autonomy Level

The most fundamental distinction. Does the system require a human to initiate every action, or can it initiate actions on its own based on defined triggers or ongoing monitoring?

Assistant tier: Every action is human-initiated. The system responds to prompts. It does not operate between human interactions.

Agent tier: The system operates toward a goal, initiating steps based on its own perception of the environment, the state of a workflow, or defined trigger conditions. It acts between human interactions.

When evaluating a vendor's product, the autonomy question is simple: "Can this system take an action in an external system without a human pressing a button?" If the answer is no, you are looking at an assistant or, at best, a supervised agent that drafts actions for human approval, regardless of what the vendor calls it.

C — Context Retention

Does the system maintain memory and state across steps in a workflow, across sessions, and across the lifespan of a task?

Assistant tier: Stateless or limited to a single conversation session. Each interaction begins without knowledge of prior interactions unless explicitly provided.

Agent tier: Maintains persistent memory of workflow state, prior actions, and accumulated context. A well-designed agent knows it sent an email two hours ago, that the recipient hasn't responded, and that its configured next step is to try an alternate communication channel.

Context retention is what enables agents to manage long-horizon tasks — workflows that unfold over hours, days, or weeks — rather than just responding to moment-in-time requests.
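The follow-up example above (an email sent two hours ago with no reply) can be sketched as a decision made from persisted state. This is an illustration under stated assumptions: the field names, the two-hour wait, and the step names are hypothetical, and a JSON string stands in for whatever store a real platform would use.

```python
import json
from datetime import datetime, timedelta


def next_step(state: dict, now: datetime, wait: timedelta = timedelta(hours=2)) -> str:
    # Decide what to do from persisted state alone, not from a fresh prompt.
    if state.get("reply_received"):
        return "close_task"
    sent_at = datetime.fromisoformat(state["email_sent_at"])
    if now - sent_at >= wait:
        return "try_alternate_channel"
    return "keep_waiting"


# State as it might be written to disk or a database between runs.
saved = json.dumps({
    "task_id": "T-1",
    "email_sent_at": "2026-04-08T09:00:00",
    "reply_received": False,
})

state = json.loads(saved)  # a later run reloads it
decision = next_step(state, now=datetime(2026, 4, 8, 11, 0, 0))
```

A stateless assistant has no equivalent of `saved`, which is why it cannot pick a task back up hours later.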

T — Tool Access

What external systems, APIs, and capabilities can the AI invoke directly?

Assistant tier: Output is contained within the conversation interface. The system generates text, images, or structured data — but cannot act on external systems directly.

Agent tier: Connected to external systems via APIs, database connections, web browsers, code execution environments, or native integrations. The agent can read from and write to these systems as part of executing its workflow.

Tool access is the implementation layer that transforms AI reasoning capability into business process automation. An agent with sophisticated reasoning but limited tool access is constrained in the value it can deliver. The breadth and depth of tool integration is one of the most important architectural decisions in any agent deployment.
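One way to picture the read versus write distinction is a tool registry that marks which tools change an external system. The sketch below is hypothetical throughout (`Tool`, `ToolRegistry`, and both order tools are invented for illustration), and the tools return canned strings rather than calling real systems.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Tool:
    name: str
    writes: bool  # True if the tool changes an external system
    run: Callable[[str], str]


class ToolRegistry:
    def __init__(self, allow_writes: bool):
        self.allow_writes = allow_writes
        self._tools = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def call(self, name: str, arg: str) -> str:
        tool = self._tools[name]
        if tool.writes and not self.allow_writes:
            raise PermissionError(f"{name} writes to an external system")
        return tool.run(arg)


def build(allow_writes: bool) -> ToolRegistry:
    # Same two hypothetical tools in both registries; only the policy differs.
    registry = ToolRegistry(allow_writes)
    registry.register(Tool("lookup_order", False, lambda oid: f"order {oid}: in transit"))
    registry.register(Tool("update_order", True, lambda oid: f"order {oid}: updated"))
    return registry


assistant_tools = build(allow_writes=False)  # read-only, assistant-style access
agent_tools = build(allow_writes=True)       # read-write, agent-style access
```

Calling `assistant_tools.call("update_order", "A1")` raises `PermissionError`, while the same call on `agent_tools` succeeds. That single flag is the difference a buyer should ask a vendor to demonstrate.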

O — Orchestration Capability

Can the system coordinate multiple tools, sub-tasks, or sub-agents in sequence or in parallel to complete a complex objective?

Assistant tier: Single-task, single-response. The system handles one request at a time without the ability to decompose a complex goal into coordinated sub-tasks.

Agent tier: Capable of decomposing a high-level goal into a sequence of coordinated actions, invoking different tools for different steps, and managing the handoffs between them. In multi-agent architectures, an orchestrating agent can delegate sub-tasks to specialized agents and synthesize their outputs.

Orchestration capability is what enables agents to handle genuinely complex workflows — the kind that currently require a human coordinator to manage multiple systems, track status across steps, and route exceptions. When a vendor claims their system can "automate your entire [process]," the question that reveals orchestration capability is: "Walk me through every system it touches and every decision it makes between the first trigger and the final output."
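A rough sketch of what orchestration involves, including a failure partway through, might look like the following. The four steps loosely mirror the CRM and ERP workflow mentioned earlier. Every function name and value is hypothetical, and the `simulate_failure` flag exists only to force an error at step three.

```python
def pull_crm(ctx):
    return {**ctx, "customer": "Acme"}


def check_erp(ctx):
    return {**ctx, "open_invoices": 2}


def draft_message(ctx):
    if ctx.get("simulate_failure"):
        raise RuntimeError("template service unavailable")
    return {**ctx, "draft": f"Hello {ctx['customer']}, you have {ctx['open_invoices']} open invoices."}


def log_interaction(ctx):
    return {**ctx, "logged": True}


PLAN = [
    ("pull_crm", pull_crm),
    ("check_erp", check_erp),
    ("draft_message", draft_message),
    ("log_interaction", log_interaction),
]


def orchestrate(plan, ctx):
    completed = []
    for name, step in plan:
        try:
            ctx = step(ctx)
        except Exception as exc:
            # Stop at the failing step and hand the partial state to a human.
            return {"status": "escalated", "failed_step": name,
                    "completed": completed, "error": str(exc), "context": ctx}
        completed.append(name)
    return {"status": "done", "completed": completed, "context": ctx}


ok = orchestrate(PLAN, {})
failed = orchestrate(PLAN, {"simulate_failure": True})
```

The second run is the one worth asking a vendor about: it records which steps completed, which step failed, and what state was handed off, instead of silently stopping.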

R — Rollback and Human Override

How is human oversight implemented, and what happens when the agent encounters a situation outside its defined parameters?

Assistant tier: Human oversight is inherent to the architecture — every output is reviewed before any action occurs. There is nothing to roll back because the system hasn't acted.

Agent tier: Human oversight must be deliberately designed in. A well-architected agent has explicit escalation criteria, human-in-the-loop checkpoints for high-stakes or ambiguous decisions, audit logging of every action taken, and the ability for a human to pause, redirect, or reverse agent activity.

This dimension is the most important one from a risk management perspective, and it is the one most commonly underspecified in vendor demonstrations. Ask every AI agent vendor: "What happens when the agent encounters a scenario outside its training or configuration?" and "Show me the audit log of an agent run." The answers will tell you more about real-world deployability than any feature sheet.
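As an illustration of what "deliberately designed in" can mean, the sketch below logs every attempted action, holds high-stakes actions for approval, and lets a human pause the run. It is a simplified assumption of how such controls might be structured. `SupervisedRun` and its outcome labels are hypothetical, and reversing actions that already executed is left out.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class SupervisedRun:
    paused: bool = False
    audit_log: list = field(default_factory=list)

    def _record(self, action: str, outcome: str) -> None:
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "outcome": outcome,
        })

    def act(self, action: str, high_stakes: bool = False) -> str:
        if self.paused:
            outcome = "blocked_paused"
        elif high_stakes:
            outcome = "awaiting_human_approval"
        else:
            outcome = "executed"
        self._record(action, outcome)  # every attempt is logged, not just successes
        return outcome

    def pause(self) -> None:
        # Human override: no further actions execute until the run is resumed.
        self.paused = True
        self._record("pause", "human_override")


run = SupervisedRun()
outcomes = [run.act("send_confirmation"), run.act("issue_refund", high_stakes=True)]
run.pause()
outcomes.append(run.act("send_confirmation"))
```

After this run, `run.audit_log` holds four entries, including the pause itself. That is the kind of record to request when you ask a vendor to show the audit log of an agent run.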

The Capability Spectrum: It's Not Binary

One of the most important things to understand is that "assistant" and "agent" are not two discrete boxes — they are endpoints on a continuous spectrum. Most of the interesting and practically useful AI deployments today sit somewhere in the middle.

Tier 1 — Pure Assistants: Stateless, prompt-driven, no tool access, no external system integration. Output is text or structured data reviewed entirely by a human before any action. Examples: base ChatGPT, Claude, Gemini chat interfaces.

Tier 2 — Enhanced Assistants: Stateless or session-persistent, prompt-driven, with read-only access to connected data sources. Can retrieve and synthesize information from internal systems but cannot write back or take action. Examples: RAG-based internal knowledge tools, AI-enhanced dashboards with natural language querying.

Tier 3 — Supervised Agents: Can take actions in external systems but requires explicit human approval for each action or action batch. Autonomy is extended to planning and drafting, but execution requires human sign-off. Examples: AI tools that draft emails or update records and queue them for human review before sending or saving.

Tier 4 — Constrained Autonomous Agents: Operates autonomously within a well-defined, bounded workflow with human-in-the-loop checkpoints at defined exception or escalation criteria. Takes action independently for routine cases, escalates non-routine ones. Examples: AI-driven customer service systems that resolve standard inquiries autonomously and escalate complex or sensitive cases.

Tier 5 — Fully Autonomous Agents: Operates end-to-end toward a high-level goal with minimal human interaction. May coordinate sub-agents, manage long-horizon workflows, and adapt its approach based on environmental feedback. Human involvement is limited to goal-setting, periodic review, and exceptional escalations. Examples: advanced AI operations platforms in mature deployments.

Understanding where on this spectrum your use case actually needs to sit is the most important calibration step in any AI deployment decision. The mistake we see most often is organizations buying Tier 5 capability for a Tier 2 or 3 use case — or buying Tier 2 capability because it's the most accessible, when their use case requires Tier 4 to deliver meaningful ROI.
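The five tiers can be approximated as a short decision function. This is a simplification for illustration only: the four capability flags are our own hypothetical reduction of the tier descriptions, and a real evaluation would weigh all five ACTOR dimensions rather than four booleans.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Capabilities:
    reads_external_data: bool
    writes_external_systems: bool
    approval_required_per_action: bool
    bounded_with_escalation: bool


def classify_tier(c: Capabilities) -> int:
    if not c.writes_external_systems:
        # Tiers 1 and 2 never act on external systems.
        return 2 if c.reads_external_data else 1
    if c.approval_required_per_action:
        return 3  # supervised agent: a human signs off before execution
    # Autonomous execution: bounded workflows with checkpoints are Tier 4.
    return 4 if c.bounded_with_escalation else 5


base_chat = Capabilities(False, False, False, False)
rag_tool = Capabilities(True, False, False, False)
draft_and_queue = Capabilities(True, True, True, False)
service_desk = Capabilities(True, True, False, True)

tiers = [classify_tier(c) for c in (base_chat, rag_tool, draft_and_queue, service_desk)]
```

Running the same function against a vendor's answers, rather than against their marketing label, is a quick way to see which tier is actually on offer.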

Why Buying the Wrong Tier Is So Common

The mismatch between the capability tier an organization needs and the tier it purchases isn't random. There are structural reasons it happens consistently, and understanding them helps you avoid the trap.

Reason 1: Vendor Incentives Favor Upward Confusion

Vendors at the agent end of the spectrum command significantly higher contract values than vendors at the assistant end. There is a strong commercial incentive to position any AI product as "agentic" regardless of its actual capability tier. The terminology ambiguity we described earlier is not accidental — it is, in many cases, a marketing strategy.

A vendor who calls their supervised agent platform an "autonomous AI agent" is not necessarily lying. They are exploiting the fact that most buyers don't have a framework for calling them on it.

Reason 2: Demos Are Optimized Scenarios

AI vendor demonstrations are choreographed for the best-case scenario. The demo environment is pre-configured with clean data, ideal integrations, and a workflow that happens to work perfectly. The exception handling, the failure modes, the real-world messiness of actual business data — none of that appears in the 45-minute Zoom demo.

The gap between "what the demo showed" and "what the deployment actually delivered" is one of the most common sources of post-purchase disappointment in enterprise AI. The ACTOR framework gives you a script for the demo: ask the vendor to demonstrate each dimension explicitly, in conditions that include exceptions and edge cases.

Reason 3: Organizational Readiness Is Consistently Overestimated

Fully autonomous agent deployments require high-quality, well-integrated data, mature process documentation, explicit human-oversight protocols, and organizational change management capability. Most small and midsize business (SMB) and mid-market organizations are not there yet, not because they lack ambition, but because they haven't invested in the foundational infrastructure that agents depend on.

At Axial ARC, we frequently see organizations attempt to deploy Tier 4 or 5 agent capability on top of Tier 1 or 2 data infrastructure. The result is an expensive deployment that underperforms, an executive team that loses confidence in AI investment, and a setback for the organization's overall technology modernization journey. The foundational work isn't glamorous. But it is the difference between an agent that actually runs and an agent that runs in the demo.

Reason 4: The Competitive Pressure Distorts Judgment

Nobody wants to be the organization that was late to AI. When a competitor announces an AI agent deployment — as in Tom's situation at the logistics company — the impulse is to respond in kind, as fast as possible, at whatever cost. This is exactly the environment in which poor technology decisions get made.

Speed and competitive response are legitimate business pressures. But the organizations that will sustain competitive advantage from AI aren't the ones who deployed something first — they're the ones who deployed the right thing, in the right context, with the right infrastructure underneath it. The companies that rushed underprepared AI deployments in 2023 and 2024 are in many cases quietly walking them back today.

How to Match the Right Capability to the Right Use Case

The practical application of everything above is a structured use case evaluation process. Before any conversation with a vendor, before any RFP, before any budget discussion, the following questions need clear answers.

Define the workflow precisely. Write out every step of the process you want AI to improve — not at a high level, but at the level of granular actions. Who does what, in what system, triggered by what event, and what decisions are made at each step? Vague process descriptions produce vague AI requirements.

Identify the human touchpoints. Where in the current workflow does a human make a decision that requires judgment, contextual knowledge, or accountability? These are your human-in-the-loop anchors. Some of them should remain human touchpoints in the AI-enabled version. Identifying them up front shapes your tier requirement.

Assess your data quality and integration landscape. Agents are only as good as the data they have access to and the integrations they can invoke. Before evaluating agent-tier capabilities, be honest about the state of your data: Is it clean, consistent, and accessible? Are your key business systems API-accessible? Is there a defined data model that an agent can rely on?

Determine acceptable autonomy and risk tolerance. For each step in the workflow, ask: "What is the cost of an error here?" Steps with low error cost and high volume are candidates for autonomous execution. Steps with high error cost or significant business consequence are candidates for human review requirements. This mapping directly informs your tier selection.

Define success metrics before you buy. What does a successful deployment look like in measurable terms? Reduction in processing time? Decrease in error rate? Improvement in customer response time? Cost savings? Defining these metrics in advance forces specificity in your use case definition and gives you the benchmarks to evaluate actual deployment performance.
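The autonomy and risk tolerance step above lends itself to a simple worksheet. The sketch below is one hypothetical way to encode it: the cost labels, the threshold of 100 items per day, and the example workflow steps are all assumptions, and any combination the text does not address is marked for case-by-case review rather than guessed.

```python
def review_mode(error_cost: str, daily_volume: int, high_volume: int = 100) -> str:
    # High error cost always keeps a human in the loop.
    if error_cost == "high":
        return "human_review"
    # Low error cost and high volume are candidates for autonomous execution.
    if error_cost == "low" and daily_volume >= high_volume:
        return "autonomous"
    # Everything else needs a judgment call by the process owner.
    return "case_by_case"


# Hypothetical workflow steps: (name, error cost, items per day).
WORKFLOW = [
    ("send_customer_confirmation", "low", 300),
    ("assign_technician", "low", 300),
    ("approve_emergency_callout", "high", 5),
    ("issue_goodwill_credit", "low", 3),
]

plan = {step: review_mode(cost, volume) for step, cost, volume in WORKFLOW}
```

If most steps land on "autonomous", the use case leans toward Tier 4. If most land on "human_review", a Tier 2 or Tier 3 tool is likely the better fit.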

Three Scenarios: Applying the Framework

Scenario 1: The Professional Services Firm Evaluating AI for Proposal Generation

A 75-person management consulting firm wants to use AI to accelerate the creation of client proposals. Currently, senior consultants spend six to ten hours per proposal pulling from prior engagements, adapting templates, and drafting new sections.

Right capability tier: Tier 2 to Tier 3 — Enhanced Assistant or Supervised Agent.

Why: The value here is in accelerating human work, not replacing human judgment. Proposal quality depends on consultant expertise, client relationship context, and nuanced positioning decisions that a human is best equipped to make. An AI that can retrieve relevant prior engagement content, draft initial sections based on templates and retrieved context, and surface relevant case studies dramatically reduces the time burden while keeping the consultant in the creative and judgment seat. Full autonomy in proposal drafting would produce mediocre proposals and would create client relationship risk.

What to avoid: Vendors selling "autonomous proposal generation agents" for this use case. The automation value is in the retrieval and drafting acceleration, not in the removal of human judgment from the process.

Scenario 2: The Regional HVAC Company Managing Service Scheduling and Dispatch

A 12-location HVAC service company receives 200 to 400 service requests per day across residential and commercial accounts. Scheduling coordinators are overwhelmed during peak seasons, and response time variability is hurting customer satisfaction scores.

Right capability tier: Tier 4 — Constrained Autonomous Agent.

Why: Routine scheduling logic — matching technician skills and location to job type and customer location, checking parts availability, generating dispatch orders, sending customer confirmations — is highly structured and rule-governed. The business logic is well-defined. The data is clean and accessible. The error tolerance for routine scheduling decisions is high (a schedule can be adjusted if it's wrong). Human involvement should be reserved for escalations: same-day commercial emergencies, scheduling conflicts the system can't resolve, customer escalation calls.

What to avoid: A Tier 2 assistant or Tier 3 supervised agent that still requires a coordinator to review and approve every schedule. The volume doesn't support a supervised workflow. At the other extreme, a Tier 5 fully autonomous system that tries to handle escalations and exceptions without human oversight creates customer relationship and service delivery risk.
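To show what "constrained autonomy" could look like for this scenario, here is a minimal dispatch sketch. It assumes a deliberately simple rule set: match on skill and service zone, prefer the technician with the most free slots, and escalate anything else. The classes, zones, and sample data are hypothetical. Parts availability and slot decrementing are omitted for brevity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Technician:
    name: str
    skills: frozenset
    zone: str
    free_slots: int


@dataclass(frozen=True)
class Job:
    job_id: str
    skill: str
    zone: str
    commercial_emergency: bool = False


def dispatch(job: Job, technicians: list) -> dict:
    if job.commercial_emergency:
        # Reserved for a human coordinator by design.
        return {"job": job.job_id, "status": "escalate", "reason": "same-day commercial emergency"}
    candidates = [t for t in technicians
                  if job.skill in t.skills and t.zone == job.zone and t.free_slots > 0]
    if not candidates:
        return {"job": job.job_id, "status": "escalate", "reason": "no matching technician"}
    chosen = max(candidates, key=lambda t: t.free_slots)
    return {"job": job.job_id, "status": "scheduled", "technician": chosen.name}


techs = [
    Technician("Ana", frozenset({"furnace", "ac"}), "north", 3),
    Technician("Ben", frozenset({"ac"}), "south", 0),
]
decisions = [
    dispatch(Job("J1", "ac", "north"), techs),
    dispatch(Job("J2", "ac", "south"), techs),
    dispatch(Job("J3", "furnace", "north", commercial_emergency=True), techs),
]
```

Only the first job is scheduled without a human. The other two are escalated, which is the behavior that separates a Tier 4 deployment from a Tier 5 one.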

Scenario 3: The Financial Services Firm Evaluating AI for Compliance Monitoring

A regional broker-dealer needs to improve its surveillance of client communication for potential compliance violations. Currently, a compliance team manually reviews a sample of emails and call recordings.

Right capability tier: Tier 3 — Supervised Agent.

Why: AI can dramatically increase the coverage of communication surveillance — processing 100% of communications rather than a sample, flagging potential violations for human review, and prioritizing the human reviewer's attention on the highest-risk items. But the decision about whether something is actually a violation, and what action to take, must remain with a human compliance officer. Regulatory exposure, legal liability, and the nuanced judgment required to distinguish a genuine violation from benign language that superficially resembles one all argue for a firm human-in-the-loop requirement on consequential decisions.

What to avoid: Any configuration that allows the AI to take action on flagged communications — blocking a message, generating a regulatory report, notifying a supervisor — without human review. In regulated environments, autonomous execution of compliance decisions creates more regulatory risk than it mitigates.

The Honest Conversation About Organizational Readiness

Here is where we tell you something that many technology vendors won't: deploying agent-tier AI capability is not primarily a technology problem. It is an organizational readiness problem.

We have assessed organizations that had the budget, the vendor selection, and the executive mandate for agent deployment — and had to advise them to delay. Not because the technology wasn't available, but because the foundations weren't there.

Data readiness is the most common gap. Agents operate on data. If that data is inconsistent across systems, trapped in formats that aren't API-accessible, or governed by policies that restrict the integrations an agent needs, the agent cannot function as designed. Before you buy an agent platform, audit the data it will depend on.

Process documentation is the second most common gap. Agents execute processes. If the process isn't documented clearly enough that you could hand it to a new employee on their first day with full confidence they'd execute it correctly, it isn't documented clearly enough for an agent. Agents don't infer process from organizational culture. They execute from defined logic.

Human oversight protocols are frequently underdeveloped. Organizations that are accustomed to having a human in every workflow step sometimes struggle to define where human oversight should be preserved when parts of the workflow become autonomous. Getting this wrong in either direction is costly: over-supervising defeats the efficiency purpose; under-supervising creates operational and compliance risk.

Change management capability is consistently underestimated. AI agent deployment changes how people work. Roles shift. Some tasks disappear. New tasks emerge — primarily around oversight, exception handling, and continuous improvement of the agent's performance. Organizations that deploy AI without a plan for managing those transitions consistently see lower adoption, higher attrition among affected employees, and poorer agent performance because the human feedback loops that improve agents over time aren't being tended.

We are not raising these challenges to discourage AI investment. We raise them because organizations that deploy AI on top of weak foundations don't just fail to capture value — they develop institutional skepticism about AI that sets them back years. Getting the foundations right first is the fastest path to sustainable competitive advantage from AI.

What to Ask Every AI Vendor

Armed with the ACTOR framework and a clear use case definition, the following questions will expose the real capability tier of any AI product in any vendor conversation:

On Autonomy: "Without any human prompting, what actions can this system initiate on its own? Show me an example." A vendor who struggles to give a clear answer is selling an assistant, not an agent.

On Context Retention: "If I start a task today and don't finish it, what does the system remember about that task tomorrow? Walk me through how state is persisted." Vague answers here reveal limited context capability.

On Tool Access: "What external systems can this product write to — not just read from — as part of its operation? Can you show me the integration architecture?" Read-only access to data is a materially different capability from read-write action execution.

On Orchestration: "For a complex, multi-step workflow, show me how the system decomposes the goal, sequences the steps, and handles a failure at step three." Polished demos of happy paths are not demonstrations of orchestration capability.

On Rollback and Human Override: "Show me the audit log from that demo run. Show me what happens when the system encounters a scenario outside its training. How does a human pause, redirect, or reverse an agent mid-run?" If the vendor doesn't have strong, specific answers to these questions, the human oversight architecture is probably not mature enough for production use.
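To make the orchestration and rollback questions concrete, it can help to picture the minimum machinery a credible answer implies: ordered steps, a compensating action for each, an audit log, and a way for a human to pause the run. The sketch below is a simplified illustration under those assumptions. The `Step` and `Workflow` names, the log messages, and the three example steps are hypothetical and do not represent any vendor's API.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    name: str
    run: Callable[[], None]    # performs the action
    undo: Callable[[], None]   # compensating action used on rollback


@dataclass
class Workflow:
    steps: List[Step]
    audit_log: List[str] = field(default_factory=list)
    paused: bool = False       # a human operator can set this to halt the run

    def execute(self) -> bool:
        done: List[Step] = []
        for step in self.steps:
            if self.paused:
                # Stop without undoing anything; completed work stays in place.
                self.audit_log.append(f"paused before {step.name}")
                return False
            try:
                step.run()
            except Exception as exc:
                self.audit_log.append(f"failed {step.name}: {exc}")
                # Undo completed steps in reverse order, then hand off.
                for prior in reversed(done):
                    prior.undo()
                    self.audit_log.append(f"rolled back {prior.name}")
                self.audit_log.append("escalated to human")
                return False
            done.append(step)
            self.audit_log.append(f"completed {step.name}")
        return True


def noop() -> None:
    pass


def fail() -> None:
    raise RuntimeError("carrier API timeout")


# Simulate a failure at step three of a hypothetical exception-handling flow.
wf = Workflow([
    Step("read carrier email", noop, noop),
    Step("query TMS", noop, noop),
    Step("update record", fail, noop),
])
ok = wf.execute()
for entry in wf.audit_log:
    print(entry)
```

In this run the first two steps complete, the third fails, the first two are rolled back in reverse order, and the log ends with an escalation entry. A vendor whose product cannot show you the equivalent of that log for a failed run is demonstrating a happy path, not orchestration.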

The Bottom Line

The AI landscape is generating extraordinary amounts of noise, inflated claims, and commercially motivated confusion. In that environment, the organizations that win aren't the ones who chase the most aggressive AI narratives — they're the ones who develop the clearest thinking about what they actually need.

AI assistants are powerful productivity tools that have already demonstrated meaningful ROI across a wide range of business functions. They are accessible, relatively low-risk, and deployable without the deep foundational infrastructure that agent-tier systems require. For many organizations and many use cases, they are exactly the right investment today.

AI agents are a genuinely transformative capability when deployed in the right context, on the right foundations, with the right human oversight architecture. They can compress multi-day manual processes into minutes, eliminate entire categories of routine coordination work, and create compounding operational advantages. But they are not the right answer for every use case, and they are not safely deployable in every organization today.

The decision between them — and between the tiers in the middle — comes down to five questions: How much autonomy does your use case actually require? What does your data infrastructure actually support? What human touchpoints must be preserved? What are the real costs of an error? And is your organization ready to manage the change that comes with removing humans from steps they currently own?

Tom, the logistics IT Director, eventually found his answer. It wasn't the $380,000 platform. It wasn't the $40,000 chatbot dressed up in agent clothing. It was a Tier 4 constrained autonomous agent for carrier exception handling — a $95,000 implementation on a platform that passed every dimension of the ACTOR framework — built on top of three months of data cleanup and process documentation work that nobody had told him he needed to do first.

Six months after go-live, his team had eliminated 1,200 manual exception-handling steps per week. His CEO stopped asking about the competitor's press release.

The right AI capability, for the right use case, on the right foundations. That's the whole game.

How Axial ARC Helps

Axial ARC's AI & Automation practice helps business and technology leaders cut through the noise and make AI capability decisions grounded in operational reality rather than vendor narratives.

Our engagements typically begin with a structured AI readiness assessment — evaluating your data infrastructure, process documentation maturity, integration landscape, and organizational change capacity. We assess before we recommend. In roughly 40% of cases, we tell organizations that they're not ready for the capability tier they're pursuing — and we help them build the foundations that make the advanced deployment genuinely possible instead of just technically installed.

We are not aligned to any AI vendor platform. Our recommendations are based on your use case, your constraints, and your readiness — not on reseller margins or preferred vendor relationships.

If you're navigating an AI platform decision, evaluating a vendor proposal, or trying to build a coherent AI strategy in an environment full of conflicting signals, we'd welcome the conversation.

EMAIL: info@axialarc.com

TEL: +1 (813)-330-0473

© 2026 AXIAL ARC - All rights reserved.